Thousands of UK university students have been caught misusing ChatGPT and other artificial intelligence tools in recent years, while traditional forms of plagiarism have fallen markedly, a Guardian investigation has found.

A survey of academic integrity violations found about 7,000 proven cases of cheating using AI tools in 2023–24, equivalent to 5.1 for every 1,000 students. That was up from 1.6 cases per 1,000 in 2022–23.

Figures up to May suggest the rate will rise again this year, to about 7.5 proven cases per 1,000 students, but experts say recorded cases are likely only a small fraction of the problem.

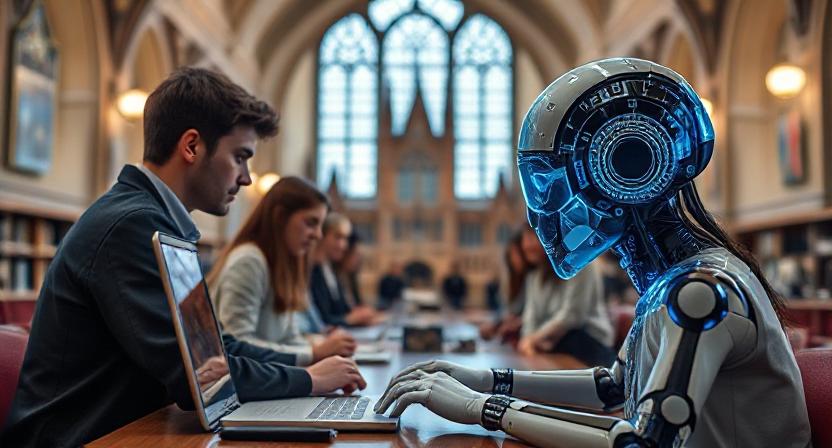

The data reveals a rapidly evolving challenge for universities: adapting their assessment methods to the arrival of ChatGPT and other AI-powered writing tools.

In 2019–20, before generative AI became widely available, plagiarism accounted for around two-thirds of all academic misconduct. Plagiarism increased during the pandemic as more assessments moved online. But as AI tools have become more sophisticated and accessible, the nature of cheating has changed.

Confirmed cases of traditional plagiarism fell from 19 per 1,000 students to 15.2 in 2023–24, and early figures from this academic year suggest they will fall again, to about 8.5 per 1,000.

The Guardian contacted 155 universities under the Freedom of Information Act, requesting figures for proven cases of academic misconduct, plagiarism and AI misconduct over the past five years. Of these, 131 provided some data, although not every university had records for each year or category of misconduct.

In 2023–24, more than 27% of responding universities did not yet record AI misuse as a separate category of misconduct, suggesting the sector is still getting to grips with the issue.

Many more cases of AI cheating may be going undetected. A February survey by the Higher Education Policy Institute found that 88% of students had used AI for assessments. Last year, when researchers at the University of Reading tested their own assessment systems, they were able to submit AI-generated work without it being detected 94% of the time.

Dr. Peter Scarfe, an associate professor of psychology at the University of Reading and co-author of that study, said there had always been ways to cheat, but that the education sector would have to adapt to AI, which posed a fundamentally different problem.

“I would assume those caught represent the tip of the iceberg,” he said. “AI detection is very different from plagiarism detection, where you can confirm the copied text. So in a situation where you suspect AI use, it is nearly impossible to prove, regardless of the percentage your AI detector (if you use one) reports. On top of this is the desire not to falsely accuse students.

“Simply moving all of a student’s assessments to in-person formats is not viable. However, the sector must also accept that students will use AI even when told not to, and that this use will go undetected.”

Students who want to cheat covertly with generative AI have plenty of online material to draw on: the Guardian found dozens of TikTok videos advertising AI paraphrasing and essay-writing tools to students. These tools help students evade common university AI detectors by “humanising” text generated by ChatGPT.

“When used well and by a student who knows how to edit the output, AI misuse is very hard to prove,” said Dr. Thomas Lancaster, an academic integrity researcher at Imperial College London. “My hope is that students are still learning through this process.”

Harvey* has just finished the final year of a business management degree at a university in northern England. He told the Guardian that most people he knew used AI to some extent, and that he had used it to generate ideas and structure for assignments and to suggest references.

“ChatGPT has always been there for me, as it kind of came along when I first started university,” he said. “I don’t think many people use AI to copy it word for word; I think it’s more often used to generate ideas and brainstorm. Anything I took from it, I would then rework completely in my own way.

“I do know someone who has used it and then other AI tools that modify and humanise the output, so that the AI content sounds as if it was written by a human.”

Amelia* has just finished the first year of a music business degree at a university in south-west England. She said she had also used AI for brainstorming and summarising, but that the tools had been most helpful for people with learning difficulties. “One of my friends uses it, not to write any of her essays or do research, but to put in her own ideas and organise them. She has dyslexia, and she says it helps her a lot.”

Peter Kyle, the science and technology secretary, recently told the Guardian that AI should be used to “level up” opportunities for children with dyslexia.

Tech companies appear to be targeting students as a key market for their AI tools. OpenAI offers discounts to college students in the US and Canada, and Google offers university students a free 15-month upgrade to its Gemini tool.

Lancaster said: “University-level assessment can sometimes seem pointless to students, even if we as educators have good reason for setting it. This all comes down to helping students understand why they are being asked to complete certain tasks, and involving them more actively in how assessments are designed.

“It’s often suggested that we should replace written assessments with more exams, but the value of rote learning and retained knowledge declines every year. I think it’s important that we focus on skills that AI can’t easily replace, such as communication skills and people skills, and on giving students the confidence to use emerging technology and to thrive in the workplace.”

A government spokesperson said it had published guidance on the use of AI in schools and was investing more than £187 million in national skills programmes.

“Generative AI has great potential to transform education and, through our plan for change, offers exciting opportunities for growth,” they said. “However, integrating AI into teaching, learning and assessment will require careful consideration, and institutions must work out how to harness the benefits and mitigate the risks in order to prepare students for the jobs of the future.”

*Names have been changed.